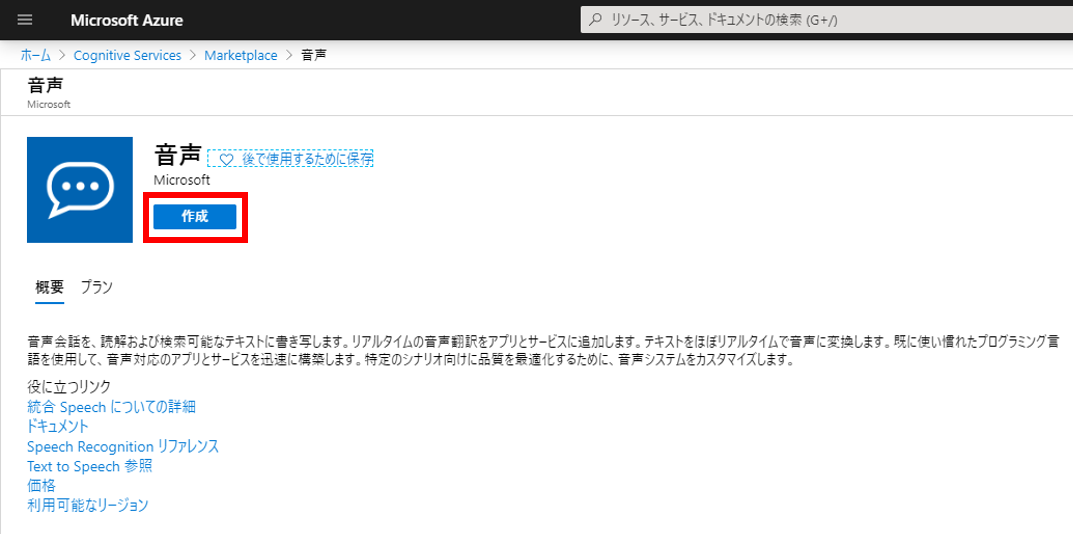

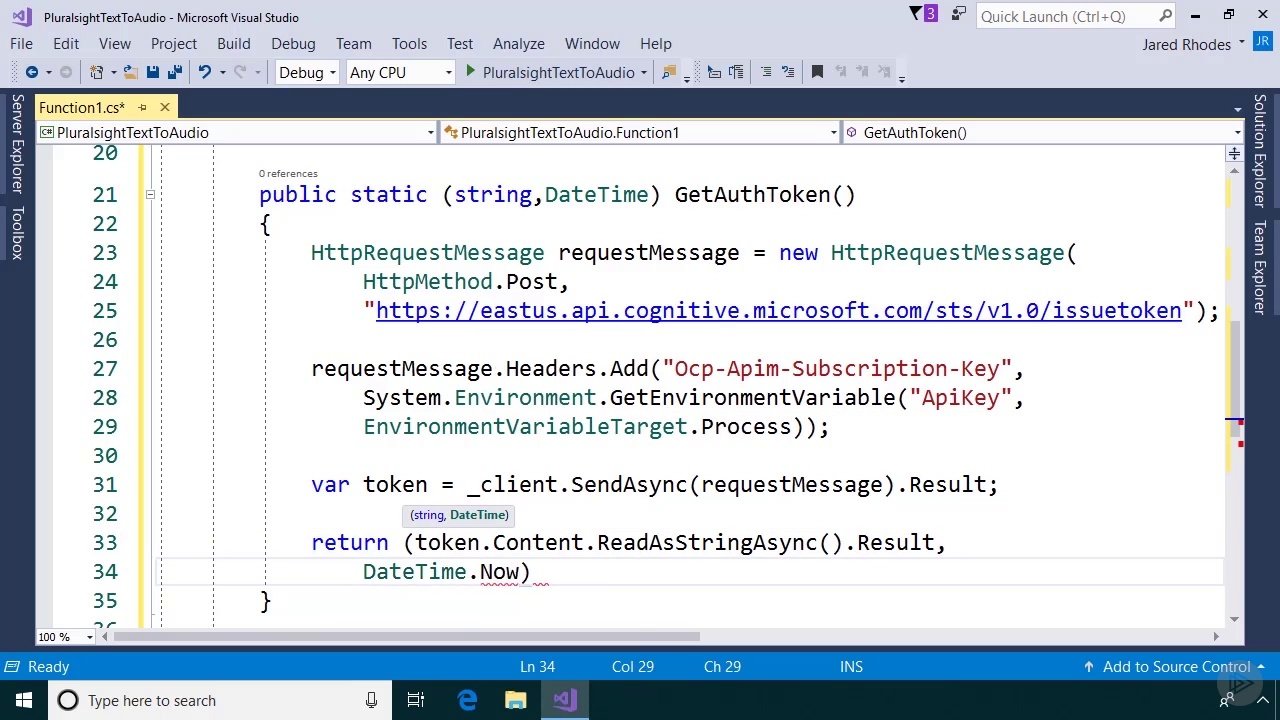

An old co-worker of mine is fond of saying "we're not launching rockets here". The point he was trying to highlight is that whatever it is we're doing, someone else (and probably somebody much smarter) has already created a solution. I've found this holds true, even in complex spaces like artificial intelligence. When we think about some of the problems one would hope to solve with AI, we find they're relatively common: there are a lot of people trying to do the same thing. These are all challenges lots of people are looking to AI to resolve. So, if there are others doing it, why build something by ourselves? There certainly are instances where a custom model and implementation will be called for. But quite frequently we can use a service created by someone else, someone smarter than we are, whose sole job is to create the best solution possible for the one specific problem.

This is where Azure Cognitive Services comes into play. Azure Cognitive Services is a collection of callable AI services which can be incorporated into any application. You can call the services by using SDKs in various languages, or even through REST calls.

It's important to note that speech recognition is not perfect, especially for domain-specific vocabulary. ASR errors may cause the text input to your MindMeld application to be something different than what the user intended, which can lead to an unexpected response. The part of the pipeline that is most susceptible to a drop in accuracy due to ASR errors is the entity resolution step. This is because domain and intent classification rely more on sentence structure and context words, which generic speech recognition handles well. However, domain-specific entities, which are generally less common words or proper nouns, are likely to be mistranscribed by generic third-party speech recognition systems. These terms are often transcribed to tokens that are phonetically similar but textually significantly different from what was said.

One way to overcome this problem is by building your own custom domain ASR system if you have enough audio data available for training. This is a larger task which we won't describe here, but there are a variety of open source models available as a starting point. However, given the cost and effort associated with building ASR models from scratch, the most common scenario is to use an out-of-the-box ASR. In the following sections, we will describe a couple of techniques you can leverage in MindMeld to maintain a high entity resolution accuracy.

The MindMeld Entity Resolver is optimized for typed inputs by default. It handles variations like typos and misspellings, and it leverages synonym lists to resolve terms that are synonyms of the canonical form. In addition to these variations, for voice inputs, there is a large category of entity variations that are phonetically similar but textually different from the canonical form. For example, the entity "Arnold Schwarzenegger" may be mistranscribed to "our old shorts hanger". These two terms sound similar but have little character overlap. To handle such mistranscriptions, you can enable phonetic matching in the entity resolver by specifying the phonetic_match_types parameter in your entity resolution config. The value corresponding to the phonetic_match_types key is a list of phonetic encoding techniques. Currently, double_metaphone is the only supported value for phonetic_match_types. It can be specified in your application configuration file.

There are a few common techniques that are used to generate the phonetic representation of text, of which one of the most optimized and efficient is the double metaphone algorithm. Metaphone is based on a series of rules optimized for indexing the phonetic representations of words.
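As a rough illustration of the phonetic_match_types setting described above, here is a minimal sketch of what the entry in the application configuration file might look like. Only the phonetic_match_types key and the double_metaphone value come from the text; the variable name ENTITY_RESOLVER_CONFIG and the model_type entry are assumptions, so check the MindMeld documentation for the version you are running.

```python
# Hypothetical entity resolution config for a MindMeld application.
# ASSUMPTION: the variable name ENTITY_RESOLVER_CONFIG and the "model_type"
# entry are illustrative and are not taken from the text above.
ENTITY_RESOLVER_CONFIG = {
    "model_type": "text_relevance",
    # A list of phonetic encoding techniques. According to the text,
    # double_metaphone is currently the only supported value.
    "phonetic_match_types": ["double_metaphone"],
}
```

Because the value is a list, further encodings could be added in the same place if they become supported.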
